Our approach

Why we built an engine instead.

Many writing tools now use AI to give feedback. Here is why ours doesn't, and why we think that matters.

AI feedback teaches you to write like AI.

When you ask an AI to critique your prose, it compares your writing to the statistical patterns in its training data. It tells you what sounds like good writing because it has seen a lot of writing and learned what the average of good looks like.

That is a useful thing. It is not the same as developing a voice.

Voice comes from the choices you make that deviate from the average. The sentences that run longer than they should. The dialogue that doesn't resolve cleanly. The image that doesn't quite fit but is exactly right. An AI will suggest you fix these. A deterministic engine just measures them and tells you what it found.

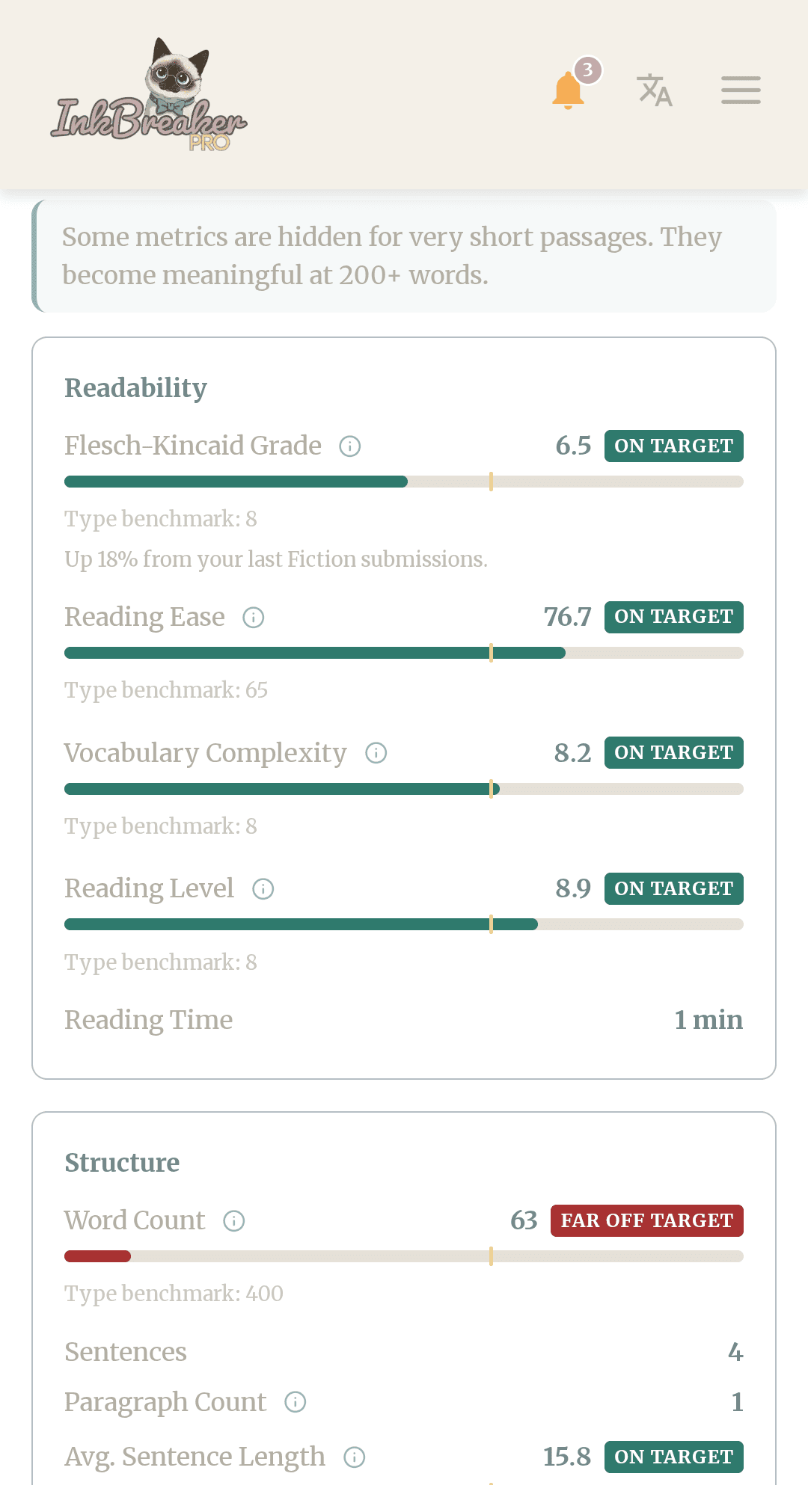

Inkbreaker does not tell you your writing is good or bad. It tells you your average sentence length is 9.2 words, your genre target is 15, and your sentence length variation is higher than in 80% of your recent submissions of this type. What you do with that is yours.
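For readers who want to reproduce numbers like these by hand, here is a minimal Python sketch of how average sentence length and its variation could be counted. It is an illustration, not Inkbreaker's code: the function names, the naive sentence splitter, and the choice of population standard deviation as the measure of variation are all assumptions.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Return the word count of each sentence.

    Assumption: sentences end at '.', '!' or '?'. A real engine would
    need to handle abbreviations, ellipses, and quoted dialogue.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def summarize(text: str) -> dict[str, float]:
    """Counts and ratios only: nothing here is inferred or generated."""
    lengths = sentence_lengths(text)
    return {
        "sentences": len(lengths),
        "mean_words": statistics.mean(lengths),
        # Population standard deviation, used here as one possible
        # definition of "sentence length variation".
        "stdev_words": statistics.pstdev(lengths),
    }


sample = "She ran. The door slammed behind her and the hallway went dark. Silence."
stats = summarize(sample)
print(stats)
```

Run it twice on the same passage and the dictionary is identical both times, because every value is a count or a ratio of counts.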

That distinction matters. The feedback that tells you what to think about your work teaches you to think what the feedback thinks. The measurement that shows you what is actually on the page leaves the thinking to you.

Measurement you can verify is different from judgment you have to accept.

When Inkbreaker tells you your passive voice is at 11%, you can check it. Count the sentences with passive constructions. Divide by the total number of sentences. The number will match.

When Inkbreaker tells you your reading ease is 68.0, that number comes from the Flesch Reading Ease formula, published by Rudolf Flesch in 1948:

206.835 − (1.015 × words per sentence) − (84.6 × syllables per word)

Plug in your own counts. You will get 68.0.
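If you would rather let a script do the arithmetic, the formula above translates directly into a few lines of Python. This is a sketch under stated assumptions: the function name is ours for illustration, and the counts in the example are made up to land on the same score, not taken from a real passage.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Flesch Reading Ease (Flesch, 1948) from three raw counts."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must both be positive")
    words_per_sentence = words / sentences
    syllables_per_word = syllables / words
    return 206.835 - (1.015 * words_per_sentence) - (84.6 * syllables_per_word)


# Hypothetical counts: 460 words, 50 sentences, 704 syllables.
score = flesch_reading_ease(words=460, sentences=50, syllables=704)
print(round(score, 1))  # 68.0
```

The only hard part is counting syllables, which is why you may see small differences between tools that count them differently. The formula itself leaves no room for interpretation.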

Paste the same passage into Inkbreaker twice and you will get the exact same numbers, down to the third decimal. That is what deterministic means: the same input always produces the same output. There is no randomness, no interpretation, no probability. The engine runs the same calculation every time.

This matters because trust in a tool is proportional to how much you can verify it. You can verify everything Inkbreaker shows you. The formulas are public. The benchmarks are documented. The metrics are counts and ratios, not impressions.

We use the Coleman-Liau index alongside Flesch-Kincaid specifically because the two formulas use different inputs. Flesch-Kincaid counts syllables; Coleman-Liau counts letters. They cross-check each other. When they agree, the score is robust. When they diverge, the engine tells you why.
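Both formulas are published, so the cross-check is easy to reproduce. The sketch below implements the Flesch-Kincaid grade level and the Coleman-Liau index from raw counts and reports how far apart they are. The `cross_check` helper, its one-grade tolerance, and the example counts are assumptions made for illustration. It only flags a divergence; it does not attempt to explain one the way the engine does.

```python
def flesch_kincaid_grade(words: int, sentences: int, syllables: int) -> float:
    """Flesch-Kincaid grade level (Kincaid et al., 1975). Syllable-based."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


def coleman_liau_index(letters: int, words: int, sentences: int) -> float:
    """Coleman-Liau index (Coleman and Liau, 1975). Letter-based."""
    letters_per_100_words = letters / words * 100
    sentences_per_100_words = sentences / words * 100
    return 0.0588 * letters_per_100_words - 0.296 * sentences_per_100_words - 15.8


def cross_check(fk: float, cl: float, tolerance: float = 1.0) -> dict:
    """Compare the two grades. The tolerance is an arbitrary choice here."""
    gap = abs(fk - cl)
    return {"fk_grade": fk, "cl_index": cl, "gap": gap, "agree": gap <= tolerance}


# Hypothetical counts for one passage.
words, sentences, syllables, letters = 460, 50, 704, 2000

fk = flesch_kincaid_grade(words, sentences, syllables)
cl = coleman_liau_index(letters, words, sentences)
result = cross_check(fk, cl)
print(result)
```

Because one formula never looks at syllables and the other never looks at letters, an error in one count cannot quietly push both scores in the same direction.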

That is not how AI works. An AI that tells you your prose "lacks clarity" is making a judgment. Inkbreaker tells you your Flesch-Kincaid grade is 12.4 against a fiction benchmark of 8. You can disagree with the benchmark. You cannot disagree with the math.

The feedback that matters most comes from humans who read carefully.

There are things a deterministic engine cannot measure. Whether your voice lands. Whether the tension in a scene is earned or manufactured. Whether the dialogue sounds like a real person talking or a writer's idea of one.

These things require a reader. Not a language model trained to simulate a reader. An actual person who brought their own reading history to your page and had a response.

Inkbreaker's model is straightforward: the engine handles what is measurable, humans handle what isn't. Your metrics tell you what is on the page. A writer in your genre tells you how it reads.

AI tries to do both. It produces measurements that are actually opinions (your prose "feels dense") and opinions that are actually measurements ("your sentences average 22 words"). The categories blur. The feedback becomes harder to act on because you cannot tell what is verifiable and what is a guess.

We think the cleaner model is better. Know what the numbers mean. Know what only a reader can tell you. Ask for both from sources equipped to give them.

That is what Inkbreaker is built for.

Inkbreaker's prose engine is built on Flesch Reading Ease (Flesch, 1948), Flesch-Kincaid (Kincaid et al., 1975), Gunning Fog (Gunning, 1952), Coleman-Liau (Coleman and Liau, 1975), and silent reading research from Brysbaert (2019). Every metric is a count, a ratio, or a formula applied to those counts. Nothing is inferred. Nothing is generated.

The only place AI touches Inkbreaker is content moderation, where we use it to flag submissions that violate our community guidelines. Every exercise, every piece of feedback, every published story is written by a human.

If you want to see the engine in action, the Prose Grade tool is free. No account needed, nothing saved.